Privacy is now front and center in technology.

Facebook, Apple, Huawei, Google: name the brand, and it is appearing in one way or another in stories about privacy and the concerns that the public, governments, and regulatory agencies have about protecting it.

Of course, questions of privacy can’t be disconnected from the larger cybersecurity discussion, since without security, data flows without any protection whatsoever. Though the general public is more aware of this relationship now than in the past, humans are still human, and a fifth to a third of them will click or tap on anything put in front of them.

At the same time, cybersecurity technologies have created privacy concerns of their own, and these concerns are often multiplied as companies try to further increase security.

As Passwords Age, Privacy Is at Issue

In the beginning, we all used passwords. And they failed miserably. Passwords revealed themselves to be:

- Easily vulnerable to brute-force attacks

- Easily lost or stolen

- Vulnerable to poor selection that made them easy to guess

- Generally inadequate as a method for protecting data and thus, privacy
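To put a rough number on the brute-force point, here is a minimal sketch of the search-space arithmetic. The guess rate is an assumption chosen purely for illustration, not a measured figure, and the function names are hypothetical.

```python
import string

# Assumed attacker speed, for illustration only (not a measured figure).
ASSUMED_GUESSES_PER_SECOND = 1e9


def keyspace(alphabet_size: int, length: int) -> int:
    """Number of distinct passwords of exactly `length` characters."""
    return alphabet_size ** length


def seconds_to_exhaust(alphabet_size: int, length: int,
                       guesses_per_second: float = ASSUMED_GUESSES_PER_SECOND) -> float:
    """Worst-case time to try every candidate at the assumed guess rate."""
    return keyspace(alphabet_size, length) / guesses_per_second


# A four-digit PIN versus eight lowercase letters.
pin_seconds = seconds_to_exhaust(len(string.digits), 4)
lowercase_seconds = seconds_to_exhaust(len(string.ascii_lowercase), 8)
```

Under that assumed rate, a four-digit PIN is exhausted almost instantly and eight lowercase letters last only a few minutes, which is why short or predictable passwords offer so little protection.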

For these reasons, the information world has been moving toward personal shared secrets and new forms of multi-factor authentication (MFA) for years.

Personal shared secrets are words or sentences that are, in theory, memorized and known only by the user and the authenticating system, just like passwords. Unlike passwords, they use real-world biographical data, which makes them easier to memorize, harder to brute-force, and less prone to the shortcuts (such as “1234” or “password”) that users often take in password selection.

Multi-factor authentication moves beyond a reliance on what the user knows to requiring them to present an identifying characteristic that they have (like a token fob or a mobile phone) or that they embody (like a fingerprint or their face).

These sound like reasonable solutions to the problem of aging password technology. Unfortunately, both of them also come with problems—often, privacy problems—of their own.

Personal Shared Secrets

Secret questions or biographical data are hard to brute-force, and because users are more likely to remember them outright, they’re less likely to be written down—and thus less likely to be lost or stolen.

Unfortunately, they create entirely new privacy problems in their own right. Consider some common examples of these sorts of prompts:

- What is your favorite kind of food?

- Who was the best man at your wedding?

- What’s the name of the street you lived on as a child?

- At which of the following addresses have you previously lived?

- At which of these banks have you previously had an account?

Using private data to protect private data is a strangely circular approach to security, yet it’s one of the most common today. In order to protect their private data, users are asked to provide a considerable amount of increasingly private data, often very personal in nature.

This isn’t just paradoxical, it’s also risky. Biographical details can be discovered by motivated research, and the more these details are stored and used for authentication, the more discoverable they’ll become as breaches continue to leak them into the wild.

The verdict on enhanced shared secrets? Privacy fail.

Mobile Apps and SMS Codes

Mobile apps and SMS codes are everywhere, proliferating rapidly as the MFA solution of choice these days. There’s a good reason for this. Users already have mobile phones and carry them wherever they go. They’re highly prized possessions.

That’s great in a way: it means that users are likely to habitually have their phones with them, eliminating “I forgot my authenticator” support cases.

But there are a whole series of attendant privacy problems as well:

- As a mandatory authentication tool, they must be carried. But by carrying them, users can have their location and activity tracked.

- To ensure authentication integrity, they must be a secured platform. This means corporate management software, which leaves all of the user’s personal data vulnerable.

- Their use as authenticators incentivizes theft. But when they’re stolen, access to all of the user’s personal data is stolen as well.

The verdict on mobile device MFA methods? Privacy fail.

Biometrics

Fingerprint scanners and face scanners are becoming ubiquitous. Modern technology has made them inexpensive and reasonably accurate, and they’re relatively intuitive to use and difficult to casually attack. All of that is good. But as MFA factors, they raise some significant privacy problems:

- Parts of users’ bodies must be carefully measured, down to the microscopic level, and this information stored and protected.

- If breached, what is often considered to be “canonical” identifying information about a user will be public forever, even though users can’t get a new fingerprint or a new face.

- Scanners can in fact be relatively easily fooled with false fingers or false faces, if a determined attack is executed.

- All of this creates a strong incentive for bad actors to steal and distribute biometric data or markers, either through technical attacks or social engineering.

Worse, because traditional biometrics are naively seen as a kind of security panacea, users are being pressured to adopt them.

An increasing number of employment contracts are contingent on the acceptance of biometric identification techniques. Public policy has taken notice, for example in the Illinois Biometric Information Privacy Act (BIPA), and legal skirmishes are emerging.

The verdict on traditional biometric MFA methods? Privacy fail.

Hardware Tokens and Authentication Cards

Hardware tokens and authentication cards are the one conventional MFA solution that is relatively strong, out-of-band, and not particularly detrimental or even risky to privacy.

If found or stolen, they don’t reveal anything about the user’s identity, body, activity, or personal biography. On the other hand, they come with a different set of problems that often make them impractical for use in many production environments:

- Users don’t have any particular attachment to or love for them, nor any use for them but during login workflows.

- They are small, portable, and provided by employers (and thus free or inexpensive to users).

- For these reasons, they are very frequently lost and very frequently forgotten.

- They are themselves specialized hardware and they often require additional specialized hardware for deployment.

- All of this leads to significant productivity, support, and frustration costs.

The verdict on hardware token and auth card MFA methods? Practicality and cost fail.

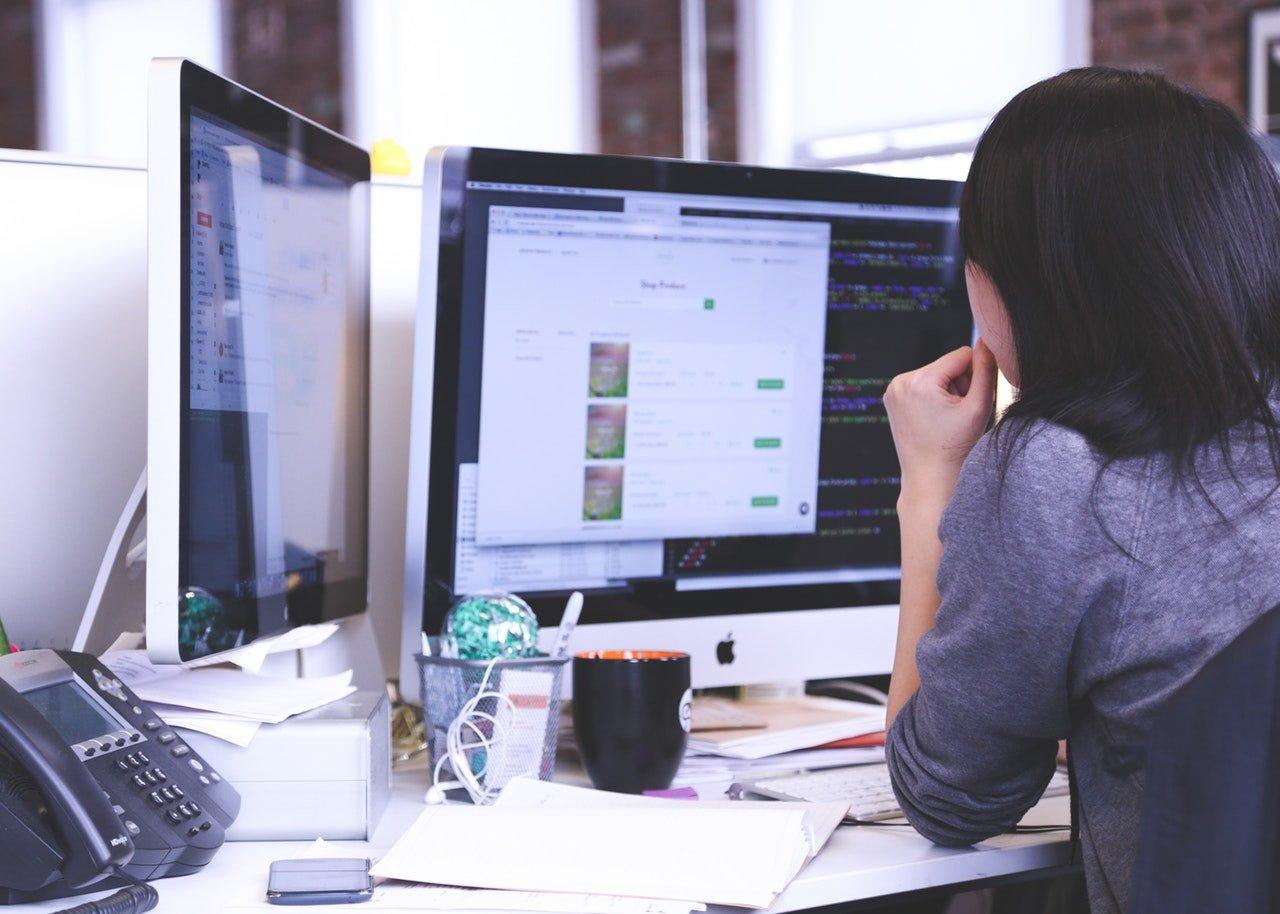

Enter Invisible MFA

So if shared secrets alone fail on privacy grounds, the most common MFA solutions also fail on privacy grounds, and the remaining MFA solutions are often too impractical to implement in all but the most security-conscious circumstances, where does that leave us?

Today we’re awash in an ocean of ambient data generated as each of us goes about our daily work. Every time a contemporary information economy worker sits down to work, multiple streams of numeric data can be generated by observing their behavior, environment, and context:

- Keyboard use, activity, and cadence

- Mouse or pointer use, activity, and cadence

- Geolocation data

- Network location data

- Network packet data

These details don’t require extra hardware or memorized secrets—they just happen—and modern computing devices have the resources to observe and capture them as numeric data, in real time.

When paired with machine learning, this data reveals moment-by-moment micro-patterns in behavior, environment, and context that are as individually unique as a fingerprint and can be used for authentication.
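As a rough illustration of how numeric behavioral data can be matched against a known user, here is a minimal sketch. It uses a simple per-feature mean and standard deviation as a stand-in for the machine learning described above; the function names, the threshold, and the sample values are all assumptions made for this example, not a description of any production system.

```python
from statistics import mean, stdev


def enroll(samples, min_std=1e-9):
    """Build a per-feature (mean, std) profile from enrollment samples.

    `samples` is a list of equal-length feature vectors (for example,
    timing values in milliseconds). Needs at least two samples.
    """
    columns = list(zip(*samples))
    return [(mean(col), max(stdev(col), min_std)) for col in columns]


def distance(profile, sample):
    """Mean absolute z-score of a new sample against the profile."""
    z_scores = [abs(x - mu) / sd for (mu, sd), x in zip(profile, sample)]
    return sum(z_scores) / len(z_scores)


def matches(profile, sample, threshold=2.0):
    """Accept the sample if it sits close enough to the enrolled profile.

    The threshold is an illustrative assumption, not a tuned value.
    """
    return distance(profile, sample) <= threshold


# Hypothetical enrollment data: two timing features per observation.
profile = enroll([[100, 80], [110, 90], [90, 70], [105, 85]])
same_user = matches(profile, [102, 82])
other_user = matches(profile, [160, 140])
```

A real system would use far more features and a trained model, but the shape is the same: learn what normal looks like for one person, then measure how far new observations drift from it.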

Why Invisible Authentication Wins

Here’s where we deliver on what we promised in the title of this post. This method of authentication is both eminently practical and privacy-safe.

The algorithms in question don’t store what was being typed, for example—just keypress timings and how these timings vary between keys, or in particular environments, or at particular times of day.
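To make that concrete, here is a minimal sketch of extracting timing features from key events. The `KeyEvent` type and `timing_features` function are hypothetical names for this example, and as a simplification the sketch drops key identity entirely and keeps only how long each key was held and the gaps between keys.

```python
from typing import NamedTuple


class KeyEvent(NamedTuple):
    key: str           # used only while capturing; never returned
    press_ms: float
    release_ms: float


def timing_features(events):
    """Return (dwell_times, flight_times) in milliseconds.

    Dwell time is how long a key was held down. Flight time is the gap
    between releasing one key and pressing the next. The typed
    characters themselves are not part of the output.
    """
    dwell = [e.release_ms - e.press_ms for e in events]
    flight = [nxt.press_ms - cur.release_ms
              for cur, nxt in zip(events, events[1:])]
    return dwell, flight


events = [KeyEvent("h", 0, 90), KeyEvent("i", 150, 230), KeyEvent("!", 300, 410)]
dwell, flight = timing_features(events)
```

Only the two lists of numbers would need to be stored or transmitted, so what was typed cannot be read back from them.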

They don’t store what was being tapped or clicked, just angles and accelerations in pointer movement, kinetic tap or swipe characteristics, and so on.
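The same idea can be sketched for pointer movement. This minimal example assumes pointer samples arrive as `(t_seconds, x, y)` tuples with non-decreasing timestamps; the function name and sample format are assumptions for illustration only.

```python
import math


def pointer_features(samples):
    """Return (angles_rad, accelerations) for a pointer trace.

    `samples` is a list of (t_seconds, x, y) tuples with non-decreasing
    timestamps. Angles are the heading of each movement segment, and
    accelerations are the change in speed between consecutive segments.
    """
    angles, timed_speeds = [], []
    for (t0, x0, y0), (t1, x1, y1) in zip(samples, samples[1:]):
        dt = t1 - t0
        if dt <= 0:
            continue  # skip samples that share a timestamp
        dx, dy = x1 - x0, y1 - y0
        angles.append(math.atan2(dy, dx))
        timed_speeds.append((t1, math.hypot(dx, dy) / dt))
    accelerations = [(s1 - s0) / (tb - ta)
                     for (ta, s0), (tb, s1) in zip(timed_speeds, timed_speeds[1:])]
    return angles, accelerations


trace = [(0.0, 0, 0), (1.0, 3, 4), (2.0, 3, 14)]
angles, accelerations = pointer_features(trace)
```

What the pointer landed on never enters the calculation; only the geometry and timing of the motion do.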

If this data is lost or stolen, it’s just numeric data—it can’t be traced back to a particular name and biography, and it can’t be used to impersonate that person, even by the most skilled impersonator.

Better yet, since this data can be observed all the time, as users do regular work, authentication can also occur all the time, as users do regular work—not just at login prompts, though this technology can easily be used to validate username and password entry at login prompts as well.
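Continuous authentication of this kind can be sketched as a rolling check over recent observations. In this minimal example, the class name, window size, and threshold are illustrative assumptions, and the anomaly scores are presumed to come from some upstream comparison of behavior against a user profile.

```python
from collections import deque


class ContinuousAuthenticator:
    """Track recent anomaly scores and flag a session that drifts."""

    def __init__(self, window=5, threshold=2.0):
        # Only the most recent `window` scores are kept.
        self.scores = deque(maxlen=window)
        self.threshold = threshold

    def observe(self, anomaly_score):
        """Record one score; return True while the session still looks genuine."""
        self.scores.append(anomaly_score)
        return sum(self.scores) / len(self.scores) <= self.threshold


auth = ContinuousAuthenticator(window=3, threshold=2.0)
results = [auth.observe(score) for score in [0.5, 0.7, 0.6, 5.0, 6.0, 7.0]]
```

Averaging over a window rather than reacting to a single score is a design choice that trades a little detection speed for fewer false alarms when a genuine user has one unusual moment.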

So let’s recap the benefits. Invisible authentication:

- Requires no specialized hardware, no user training, no user habits, and no user memory

- Is rapidly and centrally deployed by developers, providers, or administrators

- Happens transparently, in real time

- Provides fingerprint-accurate identification

- Doesn’t store information about a user’s body, history, actions, choices, or preferences

- Generates numerical data that can’t be traced back to a real-world identity

- Relies on patterned variation so small that even if stolen, the data can’t be used to impersonate someone else

The verdict on invisible MFA? It’s eminently practical and safeguards privacy in ways that traditional authentication methods can’t.

We’re proud at Plurilock™ to be in the vanguard of research and development in advanced authentication, and we believe that the future of authentication and the future of privacy are bright thanks to today’s invisible authentication technologies. ■